At BrightonSEO I talked about Third-Party tags, their impact on site-speed, and some of the approaches I encourage my clients to use to reduce this impact.

As it’s hard to fit everything into a twenty minute talk, this post expands on the talk and includes some of the points I didn’t have time to cover.

From Analytics to Advertising, Reviews to Recommendations, and more, it’s common for sites to rely on Third-Party tags to provide some of their key features.

But there’s also a tension between the value tags bring and the privacy, security and speed costs they impose.

I’m focusing on speed, but if you want to learn more about the other aspects, Laura Kalbag and Wolfie Christl often cover the privacy concerns, and Scott Helme sometimes covers the security issues.

What does a Tag Cost?

When I’m helping clients to improve the speed of their sites one of my first steps is to test the site with and without tags using WebPageTest (you can also use this approach to test the impact of individual tags).

This gives me an indication of what gains might be made by optimising the implementation of tags.
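As a minimal sketch of the ‘without tags’ run, the helper below builds a WebPageTest script that blocks a list of third-party domains before loading the page. `blockDomains` and `navigate` are WebPageTest scripting commands; the helper name, the page URL and the domains are placeholders for illustration, not the tags from any real site.

```javascript
// Builds a WebPageTest script that blocks the given domains, then loads the page.
// WebPageTest scripts use one command per line, classically with a tab between
// the command and its parameters; blockDomains takes a space-separated list.
function buildBlockingScript(pageUrl, thirdPartyDomains) {
  const lines = [];
  if (thirdPartyDomains.length > 0) {
    lines.push('blockDomains\t' + thirdPartyDomains.join(' '));
  }
  lines.push('navigate\t' + pageUrl);
  return lines.join('\n');
}

// Placeholder domains, for illustration only.
const wptScript = buildBlockingScript('https://www.example.com/product', [
  'tags.example.net',
  'chat.example.org',
]);
console.log(wptScript);
```

Running the same page once with this script and once without gives the two filmstrips to compare. Passing a single domain tests the impact of an individual tag.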

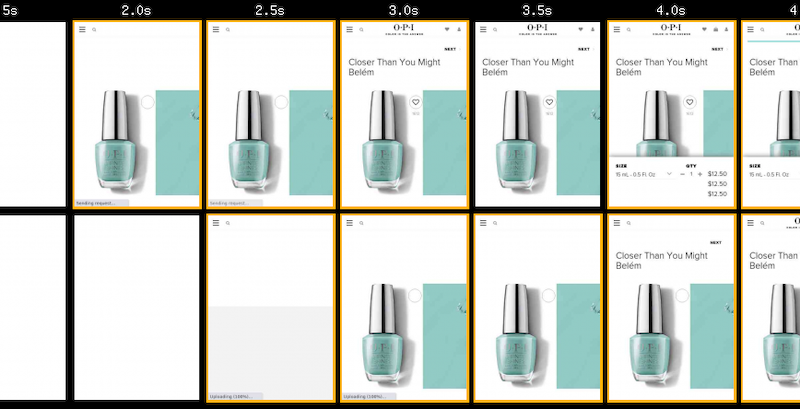

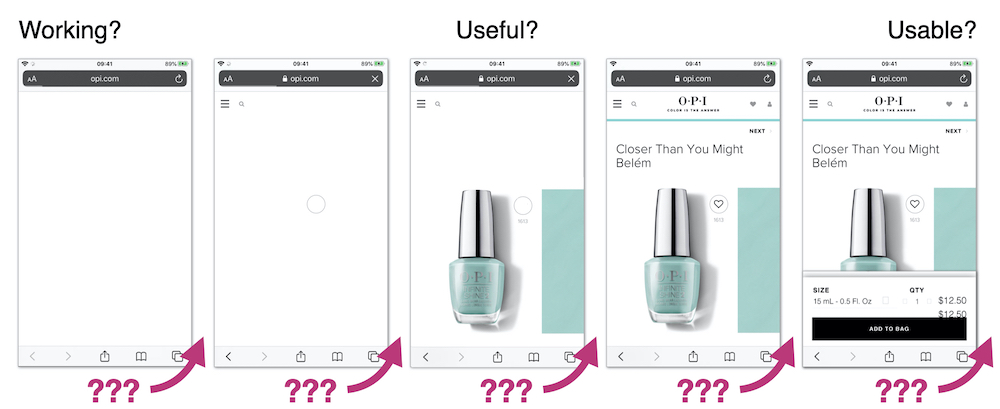

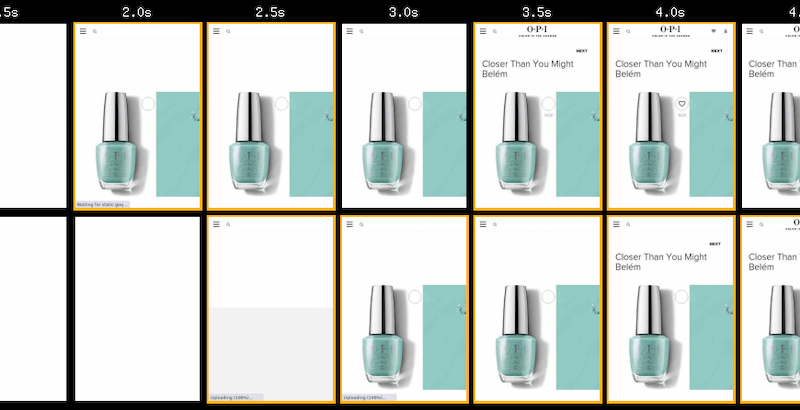

Using OPI, the nail varnish company, as an example: when third-party tags are removed their pages get faster – on product pages the key image appears about a second sooner, and other content such as the heading text and brand logo also appears sooner.

OPI with 3rd-Party Tags blocked (top), and as loaded normally (bottom)


How Tags Impact Site-Speed

There are two ways tags impact site-speed – they compete for network bandwidth and processing time on visitors’ devices, and depending on how they’re implemented they can delay HTML parsing.

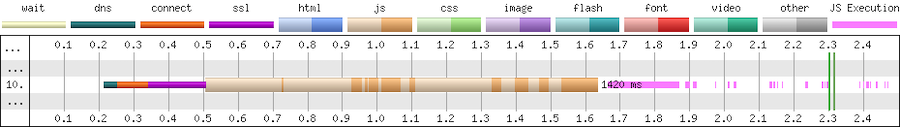

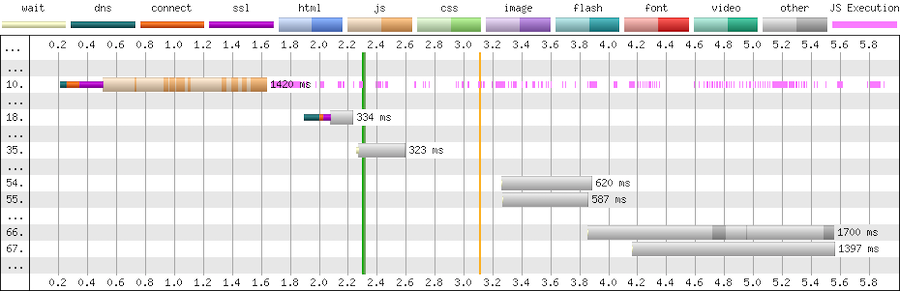

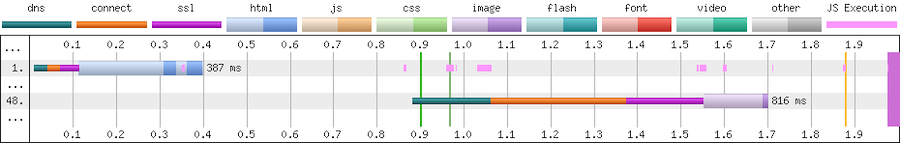

This partial waterfall from WebPageTest illustrates the costs for fetching and executing the script.

First there’s a 300ms delay while the browser connects to the third-party (cyan, orange and magenta segments), then the download of the script takes a further 1,100ms (beige segment), and then the script execution takes a further ~200ms (pink segments on the right).

The dark sections of the beige line are where data is being received, and the light section where there’s no data – these extended light sections are an indication that this tag is competing for the network or the server it’s hosted on is slow.

If the script is cacheable then the cost of the network connection and download should only affect the first time it’s loaded in a session but the cost of the execution time will apply to all pages that include it.
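The connection and download costs described above can also be read in the browser with the Resource Timing API. This is a rough sketch rather than a replacement for a WebPageTest waterfall: the function names are my own, and cross-origin resources only expose the detailed phases when the third-party sends a `Timing-Allow-Origin` header, so the sketch returns `null` for those.

```javascript
// Summarises the network phases of one Resource Timing entry (values in ms).
function networkCost(entry) {
  // Cross-origin entries without Timing-Allow-Origin report 0 for the detailed phases.
  const detailed = entry.requestStart > 0;
  return {
    name: entry.name,
    total: Math.round(entry.responseEnd - entry.startTime),
    // DNS lookup + TCP + TLS; 0 when an existing connection was reused.
    connect: detailed ? Math.round(entry.connectEnd - entry.domainLookupStart) : null,
    download: detailed ? Math.round(entry.responseEnd - entry.responseStart) : null,
  };
}

// Lists the cost of every resource that was not served from the page's own host.
function thirdPartyCosts(perf, pageHost) {
  return perf
    .getEntriesByType('resource')
    .filter((entry) => new URL(entry.name).hostname !== pageHost)
    .map(networkCost);
}

// In a browser console:
//   console.table(thirdPartyCosts(performance, location.hostname));
```

Script execution time is not covered by Resource Timing, so the ~200ms of execution in the example still needs a profiler or the WebPageTest waterfall.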

Tags can also trigger further downloads. Sometimes these may be calls to an API, other times they may be adding extra scripts, stylesheets etc. to the page.

Expanding the above example we can see it makes many further calls (the chart only shows a few) to an API (grey bars). These API calls are likely to be made on every page, and again the light areas in the grey bars indicate either network contention or a slow server.

Tags are generally implemented as scripts, and a second aspect to consider is what effect they have on blocking HTML parsing.

By default script elements (such as the one below) stop the browser from parsing HTML until the script has been fetched and has finished running.

<script src="https://cdn.example.com/third-party-tag.js"></script>

We want to avoid implementations that use blocking tags as much as possible due to the delay they cause, which if the third-party isn’t reachable for some reason can be over 30 seconds.

There are a few ways to make scripts non-blocking.

Adding the async attribute tells the browser not to wait while the script is fetched, but parsing is still blocked while the script executes.

<script src="https://cdn.example.com/third-party-tag.js" async></script>

Non-blocking scripts can also be added via a small inline script snippet that inserts another script into the page. This example is for Google Tag Manager, but it’s a very common pattern.

<script>
(function(w, d, s, l, i) {
  w[l] = w[l] || [];
  w[l].push({
    'gtm.start': new Date().getTime(),
    event: 'gtm.js'
  });
  var f = d.getElementsByTagName(s)[0],
      j = d.createElement(s),
      dl = l != 'dataLayer' ? '&l=' + l : '';
  j.async = true;
  j.src = 'https://www.googletagmanager.com/gtm.js?id=' + i + dl;
  f.parentNode.insertBefore(j, f);
})(window, document, 'script', 'dataLayer', 'GTM-XXXX');
</script>

Another form of non-blocking script uses the defer attribute to instruct the browser that it doesn’t need to wait for the script to download but that it should only execute the script when all the HTML has been parsed.

<script src="https://cdn.example.com/third-party-tag.js" defer></script>

Avoid document.write as it stalls the browser – the browser can’t discover the external script until document.write executes, and then the browser must wait for the script to be downloaded and run before it can carry on parsing the HTML.

document.write('<script src="https://cdn.example.com/third-party-tag.js"><\/script>');

Tag Managers generally inject tags using a non-blocking approach but occasionally I come across one that still uses document.write.
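Where a tag is still added with document.write, one possible replacement is to insert the script element through the DOM instead. This is a sketch that assumes the third-party script does not itself depend on document.write; `injectScript` is a name I’ve made up, and the document is passed in as a parameter only so the function can be exercised outside a browser.

```javascript
// Adds an external script to the page without blocking the HTML parser.
function injectScript(doc, src) {
  const script = doc.createElement('script');
  script.src = src;
  // Scripts inserted this way are async by default; set it explicitly for clarity.
  script.async = true;
  doc.head.appendChild(script);
  return script;
}

// In a page:
//   injectScript(document, 'https://cdn.example.com/third-party-tag.js');
```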

Reducing the Impact of Tags

Our goal is to minimise the impact tags have on visitors’ experience, while still retaining the value those tags provide.

Catalogue the Tags that are Currently Deployed

There are a few ways to catalogue the tags on a page… from inspecting the contents of a tag manager container, through free tools like WebPageTest to commercial tools such as Ghostery and ObservePoint.

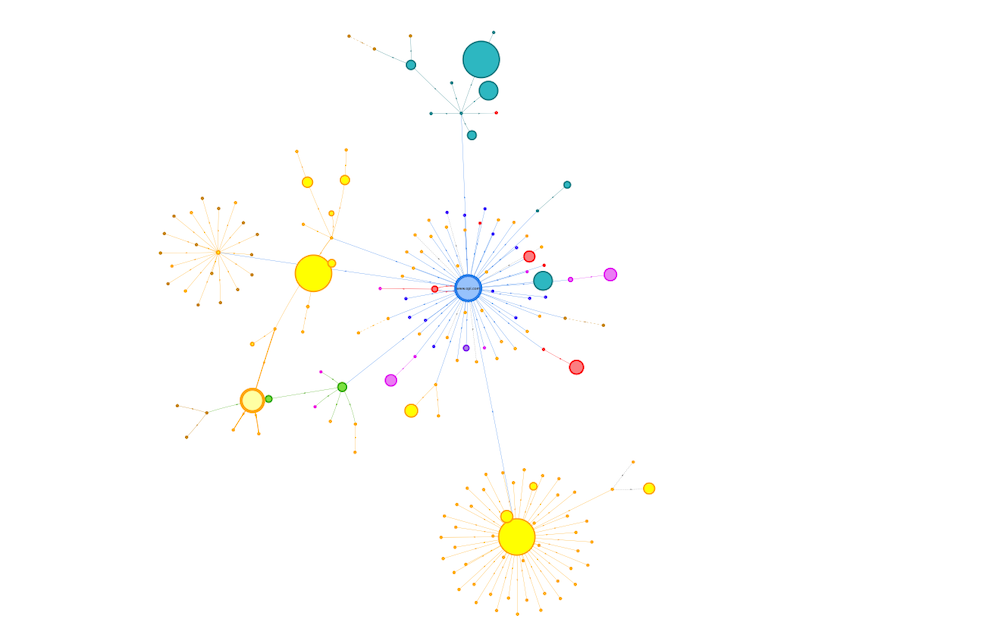

One of my favourite places to start is Simon Hearne’s Request Map – it’s built on top of WebPageTest and visualises the third-parties on a page along with details on their size, type and what triggered their load.

Request Map for OPI Product Page


I often create these for different types of pages across a site and also combine the WebPageTest data to build a cross site view.

Consolidate by Identifying Tags that can be Removed

Once I’ve got an idea of what’s on the page I start analysing and asking questions.

Initially, I aim to identify services where the subscription has lapsed, no-one is using it, or where there’s more than one product providing similar features.

When a subscription expires, some providers helpfully return an error e.g. HTTP 403, others serve an empty script but many carry on serving their full script, so sometimes it can take a bit of digging to identify them.
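A first pass over the tag URLs can be automated along these lines. It is only a sketch: the 50-byte threshold for an ‘empty’ script is an arbitrary assumption, and a tag that still serves its full script needs a person to check whether the subscription is actually live.

```javascript
// Rough classification of what a tag URL returns.
function classifyTagResponse(status, bodyLength) {
  if (status >= 400) return 'error'; // e.g. HTTP 403 from an expired account
  if (bodyLength < 50) return 'empty'; // assumed threshold for a stub script
  return 'serving'; // full script returned, needs manual follow-up
}

// Fetches one tag and classifies the response. `fetchImpl` is passed in so the
// function works with the global fetch or with a stand-in.
async function checkTag(url, fetchImpl) {
  const response = await fetchImpl(url);
  const body = await response.text();
  return { url, result: classifyTagResponse(response.status, body.length) };
}

// Example:
//   checkTag('https://cdn.example.com/third-party-tag.js', fetch).then(console.log);
```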

A few years ago (pre-GDPR) one of the European airlines audited their tags and found that subscriptions had expired for around a third of the tags on their pages, and they couldn’t find anyone who used some of the others.

So immediately with a bit of tidying up they were able to reduce the impact.

Occasionally I come across tags from different vendors that provide similar features, for example session replay services like Mouseflow and HotJar, or analytics services such as Google and Adobe.

Reducing this duplication and consolidating on a single choice is better than having multiple solutions (for both cost and visitor experience). Sometimes there can be good reasons to keep more than one analytics service, but try to deduplicate where possible.

Another question to ask at this point is whether the tag is actually needed on the current page, for example I’ve seen the Google Maps script included in every page across a site when there was only a map on one or two pages.

Reduce the Cost of Remaining Tags

When the unused tags have been removed and the duplicates consolidated I start exploring ways to reduce the impact of the remaining tags.

Initially I’ll identify which tags might be replaced with smaller, faster alternatives, and then how we can reduce the cost of the remaining ones.

Lighter Weight Alternatives

Typical wins are replacing the standard embedded YouTube player with a version that delays loading the player script until a visitor interacts with the video.

Or replacing social sharing buttons with lightweight JavaScript free versions or even removing them entirely and just relying on visitors using the sharing features built into their browser.

Switching providers is another option worth considering – one of my clients switched their chat provider from ZenDesk to Olark as it was half the size!
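The delayed YouTube player mentioned above can be sketched as a placeholder that is swapped for the real iframe on the first click. The function names are hypothetical and the placeholder markup (for example a poster image inside a button) is assumed to exist in the page already; existing open-source ‘lite’ embeds follow the same idea with more polish.

```javascript
// Builds the embed URL; autoplay=1 so the visitor's click also starts playback.
function youtubeEmbedUrl(videoId) {
  return 'https://www.youtube-nocookie.com/embed/' + encodeURIComponent(videoId) + '?autoplay=1';
}

// Replaces the placeholder with the real player the first time it is clicked,
// so none of the player's scripts are fetched before the visitor asks for them.
function activateOnClick(doc, placeholder, videoId) {
  placeholder.addEventListener('click', function () {
    const iframe = doc.createElement('iframe');
    iframe.src = youtubeEmbedUrl(videoId);
    iframe.allow = 'autoplay; encrypted-media';
    iframe.title = 'Video player';
    placeholder.replaceWith(iframe);
  }, { once: true });
}

// In a page (the selector and video id are placeholders):
//   activateOnClick(document, document.querySelector('.video-placeholder'), 'VIDEO_ID');
```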

Experimentation Frameworks and Tag Managers

The impact of AB / MV Testing services and Tag Managers can often be reduced by simplifying their work.

The size of tags for testing services often depends on the number of experiments included, number of visitor cohorts, page URLs, sites etc. and reducing these can reduce both the download size and the time it takes to execute the script in the browser.

Out of date experiments, A/A tests that are being used as workarounds for CMS issues or development backlogs, and experiments for different sites (staging and live etc.) in the same tag are some of the aspects I look for first.

Similarly the more tags and rules there are in a tag container the larger it’s going to be, and so the greater its impact on the visitors’ experience.

A client I worked with last year was using a single container for each geographical region and it contained the tags for every brand site in that region. All these tags were being shipped to every visitor even when most of them wouldn’t be used and the size of the container had a noticeable impact on visitors' experience.

I encouraged the client to switch to one container per brand to reduce its size and improve visitor experience. The challenge for them was that this increased the number of tag containers they needed to manage – there’s always a complexity tradeoff somewhere!

Tracking Pixel and Server-Side Tag Management

Barry Pollard’s approach of replacing some tags with their fallback tracking pixel, instead of using the full tag, is an interesting idea that I’ve not tried with any clients yet.

Server-side tag management helps in a similar way, as the tag manager collates the events and distributes them to other services without including the scripts from those services directly in the page.
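From the page’s point of view, server-side tag management can be as small as one request to a collection endpoint. The sketch below uses `navigator.sendBeacon`; the `/collect` path and the event fields are hypothetical and would be defined by whichever server-side tag manager is in use.

```javascript
// Sends a single event to a first-party collection endpoint.
// `nav` is the browser's navigator object, passed in for testability.
function sendEvent(nav, endpoint, event) {
  const payload = JSON.stringify(event);
  // sendBeacon queues the request so it can complete even if the visitor leaves the page.
  return nav.sendBeacon(endpoint, payload);
}

// In a page (hypothetical endpoint and event shape):
//   sendEvent(navigator, '/collect', { type: 'pageview', page: location.pathname });
```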

Libraries from Public CDNs

And although they’re not tags I also examine what 3rd-party resources – scripts, stylesheets and fonts such as jQuery, FontAwesome etc. – are being loaded from public CDNs such as jsdelivr or ajax.googleapis.com etc., with the aim of self-hosting them.

Self-hosting allows for more efficient use of network connections, especially if a site is already using a CDN and HTTP/2.

Choreograph when Tags Load

In the performance world we often refer to page load as a journey with milestones along the way… is anything happening, when does a page become useful or usable?

Third-party tags should fit into that journey…

Which ones must be loaded before the page can start displaying content to a visitor, which ones can be delayed until later, and what about the ‘bit in the middle’?

The point at which a tag needs to be loaded depends on what features it provides and when that feature is required.

But too often I see tag managers injecting tags as soon as possible.

Generally I try to delay the load of tags for as long as practical but it depends on the tag’s purpose – is it just collecting data for business use, does it affect or provide content and features that the visitor sees and when does the visitor need to see them?

Before Useful

Tags that are loaded early in the page have an outsized impact on visitor experience. They’re often included in the <head>, and browsers tend to prioritise resources included there.

The key question to answer is “does this tag need to be loaded before the visitor can see content, and what’s the impact if it’s loaded later?”

AB / MV Testing, Tag Managers, Personalisation tools and Analytics are some of the tags that are often loaded in this phase – I tend to leave them embedded in the page, but aim to have as few as possible and slim them down to minimise their impact.

Testing / experimentation tools often have a large impact in this phase.

They tend to take one of two approaches – block the page from rendering until the tag has loaded, or load non-blocking and hide the page using an anti-flicker snippet – and both of these have challenges.

Choosing a blocking approach stops the parsing of HTML until the script has downloaded and been executed.

With the non-blocking approach, anti-flicker scripts hide the page until either the testing framework has executed or a timeout value is exceeded (3 seconds in the case of this example for Adobe Target):

<script>
//prehiding snippet for Adobe Target with asynchronous Launch deployment
(function(g, b, d, f) {
  (function(a, c, d) {
    if (a) {
      var e = b.createElement("style");
      e.id = c;
      e.innerHTML = d;
      a.appendChild(e)
    }
  })(b.getElementsByTagName("head")[0], "at-body-style", d);
  setTimeout(function() {
    var a = b.getElementsByTagName("head")[0];
    if (a) {
      var c = b.getElementById("at-body-style");
      c && a.removeChild(c)
    }
  }, f)
})(window, document, "body {opacity: 0 !important}", 3E3);
</script>

I’m not a fan of snippets that hide the page – visitors are familiar with pages loading incrementally, and hiding the page interferes with their perception of speed.

Some will argue anti-flicker snippets avoid a poor visitor experience, and that if visitors see experiments making significant changes to the page it may alter their behaviour.

In the example above, even if the experimentation framework hasn’t finished its work the page is going to be revealed after 3 seconds, so visitors having slow experiences will potentially still see changes as they’re applied anyway.

I’d advise experimenting with whether you really need an anti-flicker snippet, reducing the timeout values, and also measuring the delay the anti-flicker snippet introduces (Simo Ahava has a post on how to measure it for Google Optimize).
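One way to measure that delay for a snippet like the Adobe Target example is to watch for the hiding style element being removed. This is my own sketch, not the method from Simo Ahava’s post: it assumes the snippet hides the page with a style element in the head whose id is known (`at-body-style` above), and it takes the document, observer constructor and clock as parameters so it can run outside a browser.

```javascript
// Reports how long the anti-flicker <style> element stayed in the page,
// measured from the moment this function is called.
function measureHiddenTime(env, styleId, report) {
  const { doc, Observer, now } = env;
  const start = now();
  if (!doc.getElementById(styleId)) {
    report(0); // the page is not hidden, nothing to measure
    return null;
  }
  const observer = new Observer(function () {
    // Fires on any change to <head>; only report once the style element has gone.
    if (!doc.getElementById(styleId)) {
      observer.disconnect();
      report(now() - start);
    }
  });
  observer.observe(doc.head, { childList: true });
  return observer;
}

// In a page, placed directly after the prehiding snippet:
//   measureHiddenTime(
//     { doc: document, Observer: MutationObserver, now: () => performance.now() },
//     'at-body-style',
//     (ms) => console.log('Page hidden for ' + Math.round(ms) + 'ms')
//   );
```

In practice the reported value would be sent to an analytics or RUM service rather than logged, so the delay can be compared across real visitors.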

There are methods to reduce the impact of blocking testing frameworks too.

Casper removed the network connection time by self-hosting their Optimizely script and reduced the delay before content appeared by 1.7s.

As an alternative to self-hosting, Optimizely provides instructions on how to proxy their tag through your own CDN, but proxying can bring security concerns, and you will need additional CDN configuration such as stripping the cookies you don’t want to forward to a third-party.

Testing frameworks are big bundles of JavaScript that need to be downloaded and executed so simplifying them will reduce their impact.

But ideally the work of large or blocking tags should be completed before the page reaches the visitor so explore how you can implement experiments server-side or CDN-side so they execute before the page is delivered.

Analytics / Attribution Fallbacks

One last thing to watch out for is fallbacks for visitors who have JavaScript disabled – many attribution tags use an image or iframe fallback wrapped in a noscript element.

The fallback for Bing Ads for attribution is one example:

<noscript>
  <img src="https://bat.bing.com/action/0?ti=xxxxxxx&Ver=2" height="0" width="0" style="display:none; visibility: hidden;"/>
</noscript>

These fallbacks should be placed in the body of the page as img and iframe aren’t valid elements in the head.

After Usable

Some tags provide features that aren’t much use until a visitor can interact with the page – chat and feedback widgets, session replay services etc. – so I tend to delay their load.

Often these tags are loaded much earlier than needed and their download competes for the network, often delaying far more important resources such as product images.

I’ll delay the addition of these types of tags using the Window Loaded Adobe Launch event / GTM trigger. Delaying them reduces competition for the network and allows the more important resources to complete sooner.
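Outside a tag manager, the equivalent of a Window Loaded trigger is a small wrapper around the window’s load event. The sketch below is not how Adobe Launch or GTM implement their triggers, just the same idea in plain JavaScript; `afterWindowLoaded` is a hypothetical helper name.

```javascript
// Runs `callback` once the window load event has fired,
// or straight away if the page has already finished loading.
function afterWindowLoaded(win, callback) {
  if (win.document.readyState === 'complete') {
    callback();
  } else {
    win.addEventListener('load', callback, { once: true });
  }
}

// In a page (the chat widget URL is a placeholder):
//   afterWindowLoaded(window, function () {
//     const script = document.createElement('script');
//     script.src = 'https://chat.example.org/widget.js';
//     document.head.appendChild(script);
//   });
```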

Between Useful and Usable

It’s often clear which tags need to be loaded early and which can be delayed but there’s a grey area between the page starting to render and the page becoming usable.

And as yet, I’ve not developed a clear approach on how to handle the tags that fit into this section.

Often I’m guided by whether the tag provides content the visitor sees, for example I’ll include the tag for a reviews service just before the point in the page where the reviews appear. Inserting it earlier than that may delay more important content, but adding it later can result in the page reflowing once that tag has loaded.

Tags that don’t provide content – analytics, attribution, remarketing etc. – are a bit more tricky.

A TagMan study from several years ago demonstrated that the later a tag was fired, the greater the risk of data loss as visitors abandoned the page before the tag had fired.

These types of tag are ideal candidates for server-side tag management where only one tag needs to be fired, and the server-side code can distribute the data onwards (cleaning up PII etc. on the way).
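On the server side, the clean-up and distribution step might look something like the sketch below. Everything here is illustrative: the list of fields treated as PII is an assumption that would need agreeing with whoever owns privacy, and `send` stands in for whatever HTTP client the server-side environment provides.

```javascript
// Fields removed before an event is forwarded (assumed list, for illustration).
const PII_FIELDS = ['email', 'phone', 'name', 'address'];

// Returns a copy of the event without the PII fields.
function stripPii(event) {
  const clean = {};
  for (const [key, value] of Object.entries(event)) {
    if (!PII_FIELDS.includes(key)) clean[key] = value;
  }
  return clean;
}

// Forwards the cleaned event to each downstream service.
function distribute(event, destinations, send) {
  const clean = stripPii(event);
  return destinations.map((url) => send(url, clean));
}
```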

But overall, the faster a page is, the less data loss there’s going to be.

Cut Connection Delays

The last area I explore is whether some of the delays caused by creating new network connections can be reduced.

Preconnect Resource Hints are commonly added via an HTTP header, or directly in the page using a link element:

<link rel="preconnect" href="https://www.example.com">

By default browsers wait until they’re about to request a resource before they make a connection to a server (assuming one doesn’t already exist) and making this connection ahead of time can bring forward the download of a resource.

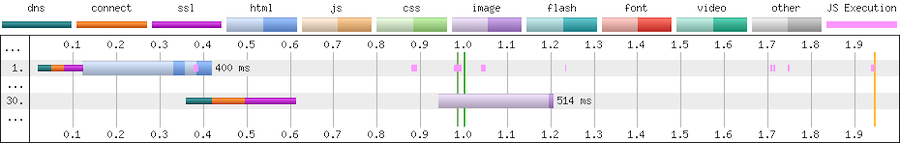

Without preconnect – image download starts at ~1.55s


With preconnect – image download starts at ~0.95s

Preconnects are cheap but they’re not free (creating an HTTPS connection consumes bandwidth in the certificate exchange) so don’t overuse them.

For tags later in the page, you can use a tag manager to inject preconnect directives at an appropriate point – for example if a tag is being injected using the Window Loaded trigger, I’ll experiment with injecting the preconnect using the DOM Ready trigger.
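A custom HTML tag fired on an earlier trigger could inject the hint with something like the following. It’s a minimal sketch; `addPreconnect` is a hypothetical helper and the origin is a placeholder for the tag’s real domain.

```javascript
// Adds <link rel="preconnect" href="..."> to the document head.
function addPreconnect(doc, origin) {
  const link = doc.createElement('link');
  link.rel = 'preconnect';
  link.href = origin;
  doc.head.appendChild(link);
  return link;
}

// In a tag fired on DOM Ready, ahead of a widget injected on Window Loaded:
//   addPreconnect(document, 'https://chat.example.org');
```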

Not every domain needs a preconnect and if you find the need to preconnect to many domains then you’re probably using too many tags.

Taming Tags Delivers Wins

When he was at The Daily Telegraph, Gareth Clubb wrote about the approach they adopted and the experience they had reducing the impact of third-party tags.

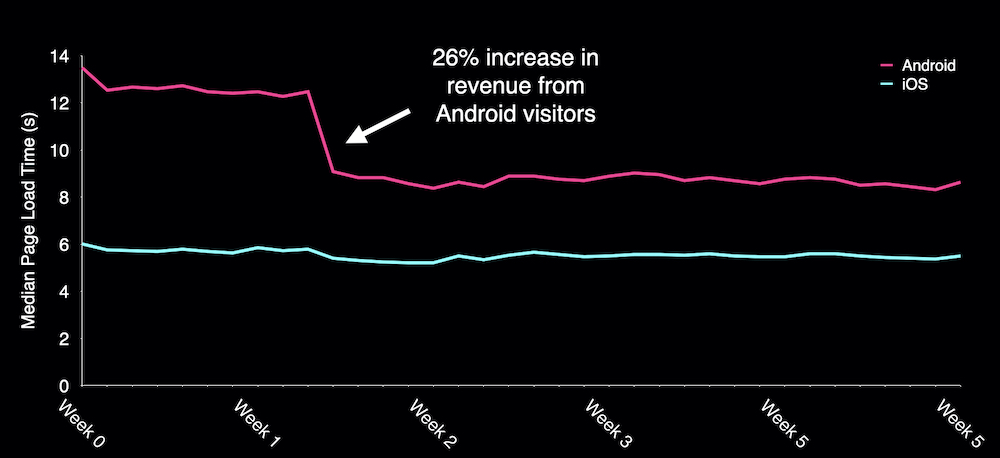

Several years ago I was working with a UK fashion retailer, and we found that one of their 3rd-party tags was slowing down visitors who used Android phones by around four seconds. The retailer decided to disable this tag for those visitors and saw a 26% increase in revenue from them.

Encouraged by this early gain the retailer went on to make improvements right across their site and reduced the median load time for Android visitors from over 14 seconds to under 6.

What about OPI?

To see what gains OPI could make if they improved the implementation of their third-party tags I used a Cloudflare Worker as proxy to rewrite the page and tested the changes with WebPageTest.

Consolidating and choreographing just a few 3rd-party tags reduced the delay before the product image appeared by one second, and there’s still plenty of opportunity for further improvements to both the base page, and their tag implementation.
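For anyone wanting to try a similar experiment, the sketch below shows the general shape of a Worker that strips selected third-party scripts from a page using Cloudflare’s `HTMLRewriter`. It is not the Worker used for the OPI tests: the blocked host is a placeholder, and it assumes the Worker sits on a route in front of the site being tested.

```javascript
// Returns an HTMLRewriter element handler that removes <script src="...">
// elements served from any of the blocked hosts.
function tagRemover(blockedHosts) {
  return {
    element(el) {
      const src = el.getAttribute('src');
      if (!src) return;
      // Relative URLs resolve against a dummy base and so are never blocked.
      const host = new URL(src, 'https://placeholder.invalid').hostname;
      if (blockedHosts.includes(host)) el.remove();
    },
  };
}

// Fetches the original page and streams it through the rewriter.
// HTMLRewriter is a global in the Cloudflare Workers runtime.
async function rewritePage(request, blockedHosts) {
  const response = await fetch(request);
  return new HTMLRewriter()
    .on('script[src]', tagRemover(blockedHosts))
    .transform(response);
}

// Wiring in a Worker module (placeholder host):
//   export default { fetch: (request) => rewritePage(request, ['tags.example.net']) };
```

The same handler pattern can move a script rather than remove it, which is how choreography changes can be trialled before asking anyone to change the real page.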

Summary

Although I’ve described a sequential process, in reality I adopt a ‘pick and mix’ approach.

Persuading clients to implement ‘quick wins’ such as replacing the YouTube player, or delaying the load of chat and feedback widgets early on in an engagement is a great way of kickstarting an overall performance improvement process.

And like many things performance related, even small incremental improvements soon add up to make a larger difference.

Next time you’re thinking about the impact third-party tags are having on site-speed keep these five principles in mind:

- Catalogue the tags that are being served to your visitors

- Consolidate to remove expired and unused tags, reduce duplication and ensure tags are only included on the pages they are used on

- Reduce the cost of tags by adopting lightweight alternatives, slimming down testing frameworks and Tag Managers. Self-host libraries instead of fetching them from public CDNs.

- Choreograph when tags are loaded so that the most important content gets shown to your visitors sooner

- Cut delays caused by connecting to tag domains

They’re not an exhaustive list of all the things you should consider when managing tags but they’ll help you move in the right direction.

And if you’d like help taming your third-party tags, or generally improving the speed of your site feel free to Get In Touch.

Further Reading

Reducing the Speed Impact of Third-Party Tags (slides)

Measuring the Impact of 3rd-Party Tags With WebPageTest

Adding controls to Google Tag Manager, Barry Pollard

Exploring Site Speed Optimisations With WebPageTest and Cloudflare Workers

Fast Fashion… How Missguided revolutionised their approach to site performance

Google Optimize Anti-flicker Snippet Delay Test, Simo Ahava

How we shaved 1.7 seconds off casper.com by self-hosting Optimizely

Content Delivery Networks (CDNs) and Optimizely

Self-hosting third-party resources: the good, the bad and the ugly

Improving third-party web performance at The Telegraph