So Google, a group of publishers, and others have launched Accelerated Mobile Pages (AMP).

AMP promotes the goal of a faster mobile web, which is something I think we’d all like to see.

If you visit g.co/ampdemo on a mobile and search for ‘Obama’, there’s no doubt the stories in the carousel come up fast, and moving between them is slick too. The demo isn’t available in all regions yet, so Addy Osmani has posted a recording of it to YouTube.

Similar to Google’s Instant Pages, AMP relies on pre-rendering and caching to make the pages load instantly.

Where AMP differs from Instant Pages is that it forces developers to use custom elements for images, audio and video, and limits some of the other web features a page can use (only AMP-supplied JavaScript, a restricted set of CSS features, no form elements other than button, and so on).

There’s plenty in the documentation about what technologies are allowed or not allowed, but there’s less information on why these choices were made.

And I wonder how much this lack of information and the project’s relative immaturity, coupled with its being backed by Google, contribute to the unease we feel about it at the moment.

Tim Kadlec has already discussed whether the incentives publishers have to adopt AMP conflict with an open web, and I’d really recommend reading his post.

After a few days of testing AMP-based pages and reading the GitHub repo and other docs, it’s clear AMP aims to be a set of custom elements and rules that enable pages to be pre-rendered quickly and easily: a set of constraints to protect us from our own excesses.

Some of the AMP components, such as those for images, audio and video, are replacements for their HTML equivalents but control asset loading more finely (and, of course, bypass optimisations like the browser’s pre-loader).
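To make “control asset loading more finely” a little more concrete, here is a minimal sketch of the decision a lazy-loading image component has to make: fetch only the images that are in, or close to, the viewport. It is not AMP’s actual code; the function name `imagesToLoad`, the `{ id, top, height }` shape and the one-viewport margin are all assumptions for illustration.

```javascript
// Hypothetical sketch (not AMP's implementation): decide which images a
// lazy-loading runtime should fetch now, given where each one sits on the page.
// Positions are vertical offsets in CSS pixels from the top of the document.
function imagesToLoad(images, scrollTop, viewportHeight, marginViewports = 1) {
  // Widen the visible window by a margin so images just outside it
  // start loading before the reader scrolls to them.
  const margin = viewportHeight * marginViewports;
  const windowTop = scrollTop - margin;
  const windowBottom = scrollTop + viewportHeight + margin;

  return images
    // Keep images whose box overlaps the widened window.
    .filter((img) => img.top < windowBottom && img.top + img.height > windowTop)
    .map((img) => img.id);
}

// Example: a 600px-tall viewport scrolled to the top of a long article.
const images = [
  { id: "hero", top: 0, height: 400 },
  { id: "inline-1", top: 900, height: 300 },
  { id: "inline-2", top: 2500, height: 300 },
];

console.log(imagesToLoad(images, 0, 600)); // hero and inline-1; inline-2 waits
```

Because the image URLs live in attributes of custom elements, which the pre-loader doesn’t look at, nothing is fetched until the runtime has loaded and made a decision like this one.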

Others provide new features, such as carousels, or wrap 3rd party services. Let’s face it, there are plenty of poorly implemented carousels and 3rd party scripts out there, so applying external quality control to them is welcome.

But does AMP really deliver in performance terms?

Is it really faster?

The AMP Project reports speed improvements of 15-85% (using SpeedIndex as their measure), but as we don’t have their data or methodology, it’s unclear how much these improvements rely on pre-rendering and caching.
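SpeedIndex, the metric introduced by WebPageTest, measures the area above the visual-progress curve: for every moment until the page is visually complete, it adds up how much of the final view is still missing, so lower is better and the unit is milliseconds. A minimal sketch of the calculation, assuming visual completeness has already been sampled as `{ timeMs, completeness }` pairs (a shape chosen for illustration; WebPageTest derives it from video frames):

```javascript
// Sketch of the Speed Index calculation:
//   SpeedIndex = integral from 0 to end of (1 - visualCompleteness(t)) dt
// Samples are sorted by time; each completeness value (0..1) holds until the
// next sample, like a step function built from video frames.
function speedIndex(samples) {
  let total = 0;
  for (let i = 1; i < samples.length; i++) {
    const interval = samples[i].timeMs - samples[i - 1].timeMs;
    // Area above the progress curve for this interval.
    total += interval * (1 - samples[i - 1].completeness);
  }
  return total;
}

// Hypothetical pages: one appears all at once at 2s,
// the other is half rendered at 0.8s but only complete at 2.5s.
const popIn = [
  { timeMs: 0, completeness: 0 },
  { timeMs: 2000, completeness: 1 },
];
const progressive = [
  { timeMs: 0, completeness: 0 },
  { timeMs: 800, completeness: 0.5 },
  { timeMs: 2500, completeness: 1 },
];

console.log(speedIndex(popIn)); // 2000
console.log(speedIndex(progressive)); // 1650
```

The progressive page finishes later but scores better, because SpeedIndex rewards showing something early. That is worth keeping in mind when comparing progressively rendered pages with pages whose content appears all at once.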

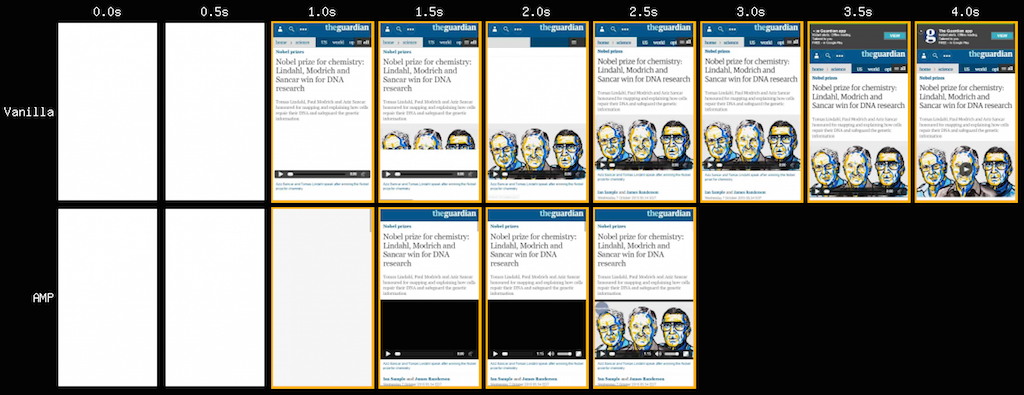

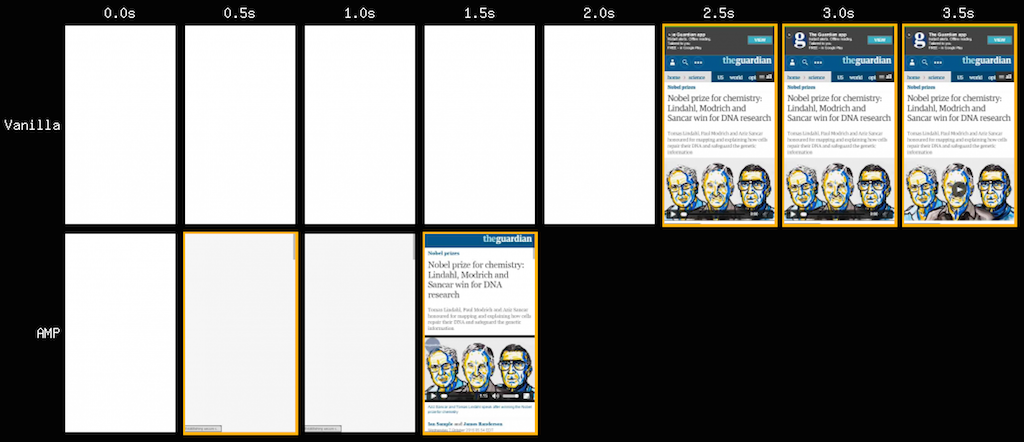

Testing AMP pages (without the benefit of pre-rendering) vs their existing equivalents shows something of a mixed picture.

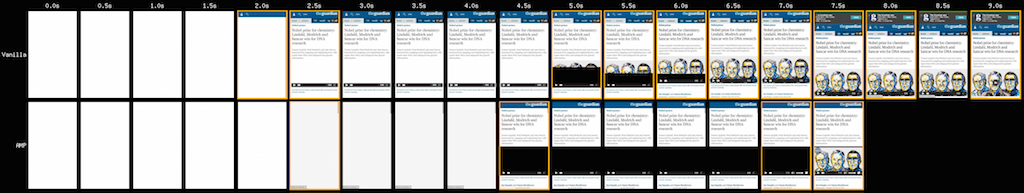

- The Guardian

(The top set of images is the current Guardian site, and the bottom the AMP equivalent)

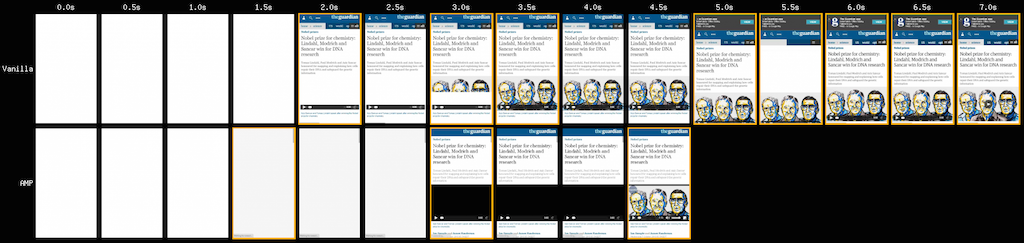

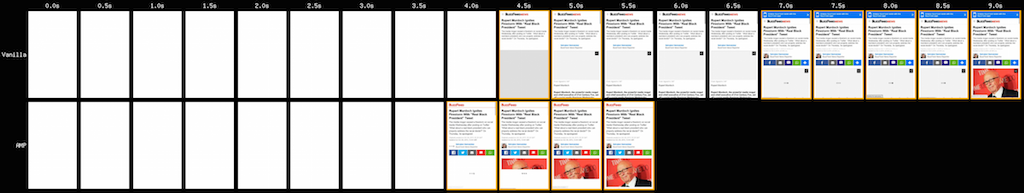

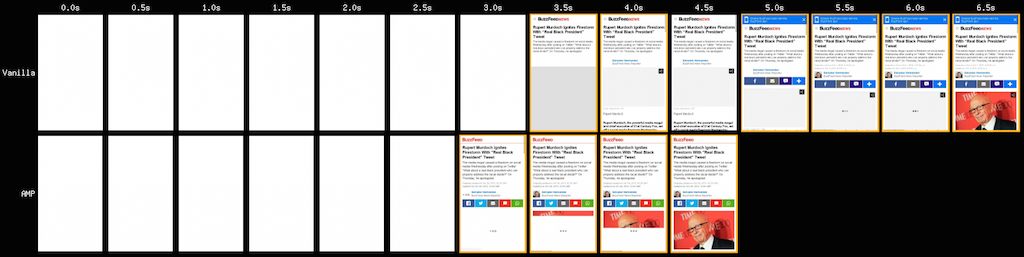

- BuzzFeed

(The top set of images is the current BuzzFeed site, and the bottom the AMP equivalent)

In the BuzzFeed examples, the AMP version is generally faster, except in the test with no network shaping.

The picture for The Guardian is reversed: over slower networks the current site starts rendering sooner but generally finishes after the AMP version. The exception is the test with no network shaping, where the AMP version is noticeably faster.

The current Guardian site makes over 100 HTTP requests per page and the AMP version only 9, yet over slower networks the current site still starts rendering sooner. The effort The Guardian’s developers have put into performance is clearly reflected in the results.
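Request counts like these are straightforward to check. WebPageTest and browser developer tools can export a test as a HAR (HTTP Archive) file, and a few lines of JavaScript will tally the requests and the hosts they come from. A minimal sketch; the function name `requestsByHost` is hypothetical, and the sample entries are invented and trimmed to the one field the function reads:

```javascript
// Sketch: count the requests in a HAR (HTTP Archive) export, grouped by host.
// Assumes the standard HAR 1.2 layout, where each har.log.entries[i]
// has a request.url.
function requestsByHost(har) {
  const counts = {};
  for (const entry of har.log.entries) {
    const host = new URL(entry.request.url).hostname;
    counts[host] = (counts[host] || 0) + 1;
  }
  return { total: har.log.entries.length, byHost: counts };
}

// A tiny hand-made HAR with made-up URLs, just to show the shape.
const sampleHar = {
  log: {
    entries: [
      { request: { url: "https://www.example.com/article" } },
      { request: { url: "https://static.example.com/app.js" } },
      { request: { url: "https://static.example.com/logo.png" } },
    ],
  },
};

console.log(requestsByHost(sampleHar)); // total 3, two of them from static.example.com
```

Grouping by host also makes it easy to see how many requests go to third-party domains.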

Note: the current Guardian site uses HTTP, whereas the AMP version uses HTTPS, so the AMP results include some TLS overhead.
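How much is “some TLS overhead”? On a new connection, a full TLS 1.2 handshake adds two round trips before the HTTP request can be sent, so its cost scales with network latency and is largest on slow, shaped connections. A rough sketch; the function name is hypothetical, and the 300 ms round-trip time is an illustrative assumption, not a figure from these tests:

```javascript
// Back-of-the-envelope sketch: time before the first response byte on a NEW
// connection, counted in network round trips. Ignores DNS lookup, server
// processing time and bandwidth.
function timeToFirstByteMs(rttMs, { https = false, tlsRoundTrips = 2 } = {}) {
  const tcpHandshake = 1; // SYN, SYN-ACK
  const tls = https ? tlsRoundTrips : 0; // full TLS 1.2 handshake: 2 round trips
  const request = 1; // HTTP request out, first byte of response back
  return (tcpHandshake + tls + request) * rttMs;
}

// Assumed 300 ms round-trip time, roughly a slow mobile connection.
console.log(timeToFirstByteMs(300)); // 600
console.log(timeToFirstByteMs(300, { https: true })); // 1200
// Session resumption or TLS False Start can save one of those round trips.
console.log(timeToFirstByteMs(300, { https: true, tlsRoundTrips: 1 })); // 900
```

Only new connections pay this cost; later requests reusing the same connection don’t.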

What about progressive enhancement?

From a browser-support perspective, the pages work in every browser I’ve tried: Chrome on Android, Safari on iOS and even Opera Mini (with a few minor quirks).

One thing you might notice in many of the AMP tests is that, apart from media, there’s little progressive rendering; the content just pops onto the page. This is by design…

The head of each page contains a style block that makes the whole page transparent, with a noscript fallback that restores it when JavaScript is disabled:

```html
<style>body {opacity: 0}</style><noscript><style>body {opacity: 1}</style></noscript>
```

The page contents don’t become visible until the AMP script has executed:

```html
<script async src="https://cdn.ampproject.org/v0.js"></script>
```

So although the pages use an asynchronous script, if it fails for any reason, the visitor sees a blank screen.
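Spelled out as a tiny model, this boilerplate leaves three cases, and only one of them is a problem: JavaScript enabled but the AMP script failing to load or run. A sketch, where `bodyOpacity` and its flags are hypothetical names used only to illustrate the behaviour described above:

```javascript
// Hypothetical model of the AMP boilerplate (not AMP code):
//   <style>body {opacity: 0}</style>
//   <noscript><style>body {opacity: 1}</style></noscript>
// plus the AMP runtime making the body visible once it has executed.
function bodyOpacity({ javascriptEnabled, runtimeExecuted }) {
  // JavaScript disabled: the <noscript> style applies and overrides opacity: 0.
  if (!javascriptEnabled) return 1;
  // JavaScript enabled: the page stays transparent until the runtime runs.
  return runtimeExecuted ? 1 : 0;
}

// The three cases a visitor can hit.
console.log(bodyOpacity({ javascriptEnabled: false, runtimeExecuted: false })); // 1
console.log(bodyOpacity({ javascriptEnabled: true, runtimeExecuted: true })); // 1
console.log(bodyOpacity({ javascriptEnabled: true, runtimeExecuted: false })); // 0 (blank screen)
```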

It’s not completely clear whether AMP is designed just to be a format for content embedded in apps, or for more general use, replacing the plain old HTML versions of publishers’ pages too.

If it is just for embedding in apps, then there’s always the option of bundling the scripts with the viewer, which guards against failure and will improve render speeds. But I’m still nervous about pages being so dependent on JavaScript for rendering (even when the blocking 3rd party scripts we use now fail, the browser will eventually render the page).

And do we really want to rely on JavaScript for ‘plain old’ document rendering when, as Jeff Atwood notes, JavaScript performance on Android isn’t really improving?

The componentisation of the web

Web components have been talked about for several years, and perhaps, by providing a set of well-defined, quality-controlled and (maybe) performant components, AMP represents the real start of the componentisation of the web.

There are, of course, questions about how the range of components gets extended, who decides what’s acceptable and how we innovate in an ecosystem of tightly controlled components.

Would BBC News or The Guardian have been able to experiment and learn how to create flexible, fast experiences in an AMP-like environment?

The current constraints still allow some performance issues to slip through: The Atlantic demo is a 447KB page, of which 347KB (roughly 78%) is fonts!

I’d also like to understand more about why AMP bypasses the pre-loader for its images, and whether some of AMP’s lazy-loading decisions for images could be built directly into browsers.

Wrap up

After a few days of exploring, experimenting with and testing AMP, I do understand more, but I’m not sure I’m more comfortable with it.

I see cases where it offers performance improvements, but others where it appears slower.

Despite the launch partners, AMP is still a developer preview, and as the GitHub issues list shows, it’s still early days.

I wonder if what AMP really does is remind us how we’ve failed to build a performant web… we know how to, but all too often we just choose not to (or lose the argument) and fill our sites with cruft that kills performance, and with it our visitors’ experience.

Perhaps it is time to press the reset button.

Only time will tell if AMP is that reset button…

Further Reading

- Get AMP’d: Here’s what publishers need to know about Google’s new plan to speed up your website
- Accelerated Mobile Pages Project, Backed By Google, Promises Faster Pages
- The State of JavaScript on Android in 2015 is… poor

Example AMP-based pages and their ‘vanilla’ equivalents

- New York Times
- The Guardian
- BuzzFeed
- The Atlantic